Companies have built their corporate image around pervasive quality and dependability arguably since the origins of advertising. Delivering quality and dependability does require a pervasive approach, with universal processes, culture, and data. It’s no wonder that one of the most frequent questions is “What system should own our quality data?”

In a quality version of the Garden of Eden, all systems would interoperate to seamlessly support processes, people would work together to advance good quality practices, and accurate and meaningful information would be available when needed. Just bask in the heavenly glow for a moment. Sadly, today’s grimy reality is often far from this utopian vision. On the data and IT side alone, quality leaders and professionals work with a dizzying array of enterprise and desktop systems that vary by function and site.

To the Rich Go the Spoils

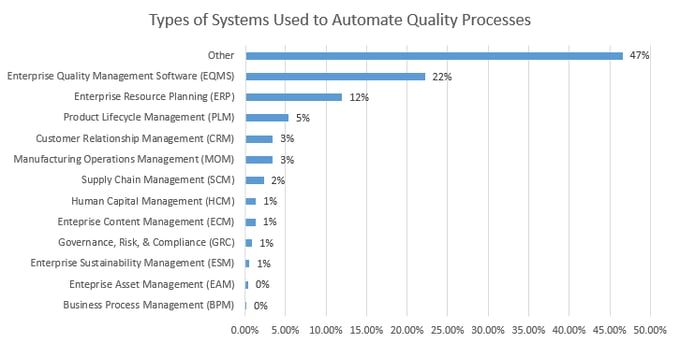

Note the large “Other” bar in the graph, as well as the large number of enterprise platforms that are engaged in quality. Unlike ERP for accounting or PLM for engineering, there is often no integral quality data model. Why? Quality is spread across functions and sites like peanut butter. It is pervasive, so it has a role in marketing and requirements, development, test, sourcing, manufacturing, service, and so on. Of course, different functions select (and pay for) systems based on their own requirements, and these systems work primarily for the benefit of those functions. However, quality must work with all of these systems because quality touches all functions.

In a nutshell, without some investment in consolidating the data model, quality will have to glue its data extracts together to generate what is often a low-resolution, still-frame picture of quality.

Let’s say that a quality manager wants to prioritize CAPAs based on risk, per ISO 9001:2015. This requires some knowledge of Severity and Occurrence. To do a defensible job, the quality manager must capture complaints from the CRM, inspections and nonconformances from multiple Manufacturing Operations Management (MOM) systems (likely one per site), supplier data for SCARs from the appropriate systems, quality data captured in the EQMS, and so on. Understanding occurrence can also depend on knowing production volume in order to calculate rates, which means dipping again into ERP, MOM, or other systems.
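To make the arithmetic concrete, here is a minimal sketch of that prioritization in Python. It assumes the event counts and production volumes have already been extracted and merged from the various systems. The 1-10 severity scale, the events-per-million-units rate, and the severity-times-rate score are illustrative assumptions, not a method prescribed by ISO 9001:2015, and every name and number is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QualityIssue:
    issue_id: str
    severity: int        # assumed 1-10 scale (hypothetical)
    event_count: int     # complaints, nonconformances, SCARs merged from extracts
    units_produced: int  # production volume pulled from ERP or MOM

    @property
    def occurrence_rate(self) -> float:
        """Events per million units produced."""
        if self.units_produced <= 0:
            raise ValueError(f"{self.issue_id}: production volume must be positive")
        return self.event_count * 1_000_000 / self.units_produced

    @property
    def risk_score(self) -> float:
        """Assumed scoring rule: severity multiplied by occurrence rate."""
        return self.severity * self.occurrence_rate


def prioritize(issues):
    """Return issues ordered from highest to lowest risk score."""
    return sorted(issues, key=lambda issue: issue.risk_score, reverse=True)


# Hypothetical data for three candidate CAPAs.
issues = [
    QualityIssue("A", severity=8, event_count=5, units_produced=100_000),
    QualityIssue("B", severity=3, event_count=40, units_produced=200_000),
    QualityIssue("C", severity=9, event_count=1, units_produced=500_000),
]

for issue in prioritize(issues):
    print(issue.issue_id, issue.occurrence_rate, issue.risk_score)
```

Note that issue B outranks the more severe issue A only because its rate is known. Without production volume, raw event counts alone would give a much blurrier picture.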

Well That Isn’t Right, But What Is?

An important concept is that quality is not and cannot be a single system due to its pervasive nature. The data should be interconnected in a system of systems that provides appropriate quality information accessibility at the plant, business unit, and corporate levels. Here are some general guidelines to improve this accessibility.

Sell Quality to the Functions

As a quality leader, be an active, enthusiastic, and supportive participant in enterprise software selections in the most important adjacent functions. Unless quality has deep pockets and can afford to pay to play, sell the value of quality to get quality requirements onto the core requirements list. Enterprise systems notoriously overrun initial services estimates, so requirements outside the core list are at risk of being deferred or dropped. Quality leaders who take frontrunner roles in, say, PLM and ERP selections and deployments can guide those deployments to ensure that quality needs are met. The needs of those who don’t are often afterthoughts.

Map Data Traceability and Ownership

When building the interconnected system of systems, here’s a critical question: Which system should be deemed to be the master of specific data in order to promote maximum quality? Answering this question in detail can help generate a clear roadmap. There may not necessarily be a need to remove quality data or processes from existing systems, or replace existing systems, as much as to define which systems become the data master and ensure that the other systems can gain visibility and traceability to the master data. This way core quality processes function effectively across multiple systems with clear data ownership and traceability rules.
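One way to start answering that question is a simple ownership audit. The sketch below assumes each system's relationship to a data entity has been recorded as either "master" or "consumer", and it flags entities with no master or with more than one. The entity names and system assignments are hypothetical examples, not recommendations.

```python
from collections import defaultdict

# Hypothetical ownership claims: (data entity, system, role).
CLAIMS = [
    ("complaint", "CRM", "master"),
    ("complaint", "EQMS", "consumer"),
    ("nonconformance", "MOM", "master"),
    ("nonconformance", "EQMS", "master"),  # two systems both claim ownership
    ("production_volume", "ERP", "master"),
    ("scar", "EQMS", "consumer"),          # no system claims ownership
]


def audit_ownership(claims):
    """Return (entities with no master, entities with conflicting masters)."""
    masters = defaultdict(list)
    entities = set()
    for entity, system, role in claims:
        entities.add(entity)
        if role == "master":
            masters[entity].append(system)
    unowned = sorted(e for e in entities if not masters[e])
    conflicts = {e: sorted(s) for e, s in masters.items() if len(s) > 1}
    return unowned, conflicts


unowned, conflicts = audit_ownership(CLAIMS)
print("No master defined:", unowned)
print("Conflicting masters:", conflicts)
```

Each flagged entity is a decision to make on the roadmap: pick one master, then ensure the other systems have visibility and traceability to it.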

Consider Use Scenarios

Don’t forget the importance of ease of use when determining traceability and ownership. One important way to keep deployed systems easy to use is to capture use scenarios and streamline the user experience in those scenarios. For instance, requiring a casual user to switch back and forth between multiple systems to complete a process can result in user frustration and poor adoption. Make sure that the user experience doesn’t undermine the desired goal of information accessibility at the corporate, business unit, and plant levels.

World-class quality management is pervasive across the organization, but this in turn creates a real systems challenge! By including the concepts above in discussions about data management, quality leaders can take meaningful steps toward a unified quality model.
