Recently, I noticed an interesting data point from our Analytics That Matter research survey: 58% of surveyed companies said they are implementing or piloting an analytics program, but only 11% are seeing dramatic business impact. The reason? I believe that while these programs are often guided by the right vision, most of them are not supported by the execution they require.

Some of these missteps in executing industrial analytics have caused manufacturing companies to overestimate their analytics maturity. The overestimation goes unnoticed until an obstacle in a later stage forces them to halt and work their way back, leaving business operations with stagnant or no value.

In this blog post, we will use data points from a recent LNS Research survey and maturity model* to highlight some of the mistakes companies are likely to make in their analytics journey and provide actionable recommendations.

Recommendation #1: Align transformation goals and metrics to strategic business objectives

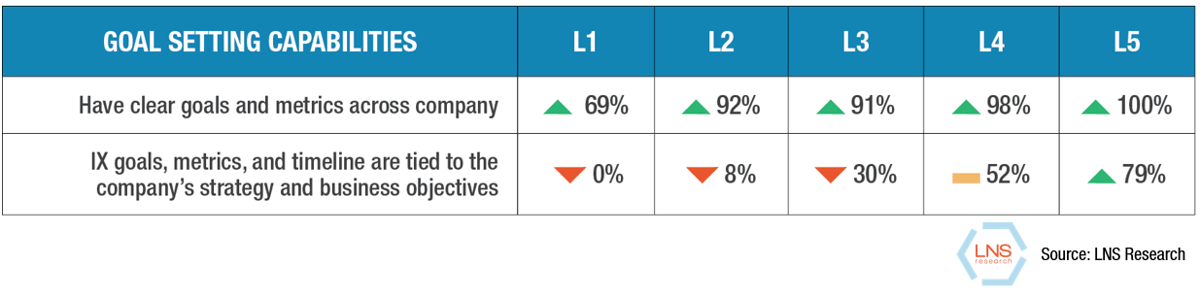

Every transformation program requires identifying goals and reporting on metrics to monitor progress against those goals. The LNS Research maturity analysis shows that most of the companies that took the survey got this right: more than two-thirds of companies, regardless of maturity level, said they have clear goals and metrics and are reporting on these metrics consistently.

However, where companies fall short is in tying these goals and metrics to the company’s strategy and overall business objectives. Failing to do this creates misalignment among the analytics use cases, existing programs, and the vision set by the leadership team. Analytics use cases should be an embedded part of existing management systems and strategic initiatives, and not be implemented as yet another separate program.

Recommendation #2: Don't focus on analytics before working on data

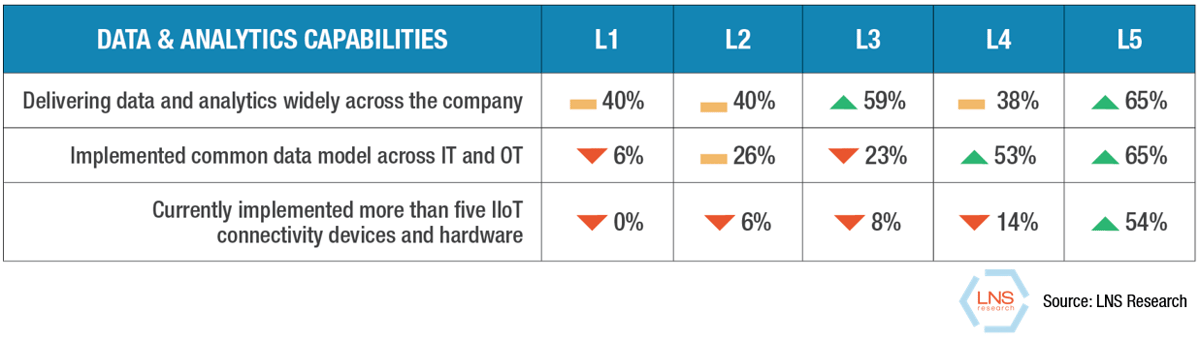

This is the most common misstep we see companies make in their analytics journey. While everyone is excited about working on analytics, working on data doesn’t quite pique the same level of interest. By working on data, I mean making sure the right data is available to the right people, in the right format, at the right time. This includes several steps like developing robust machine connectivity, implementing a common data model or data hub, and creating several data custodian roles to manage data access and quality.

The above table reflects that: while 40% of companies are delivering data and analytics widely across the company as early as in the first maturity level, the numbers for the other data connectivity and modeling best practices don’t tell the same story.

Recommendation #3: Predictive analytics is not the beginning or the end

Predictive analytics use cases, such as predicting machine failure, quality defects, and supply-demand fluctuation, have been some of the most sought-after use cases among industrial companies. However, I believe there are two missteps companies make here:

- They either begin their analytics journey with predictive use cases without the required foundational capabilities.

- Or, they don’t go beyond predictive analytics.

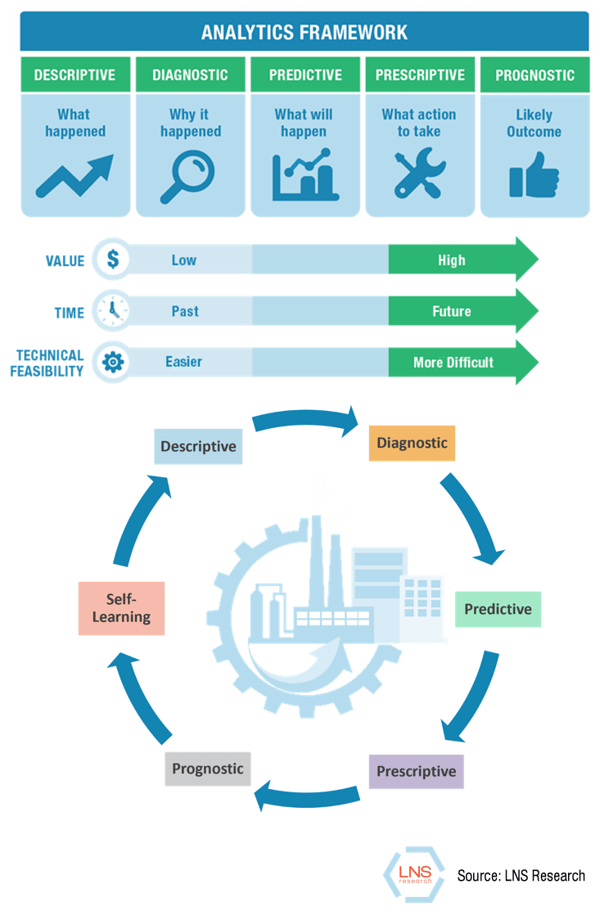

LNS Research’s analytics framework places predictive analytics as the midpoint of an analytics journey - not the beginning or the end. Ideally, companies should first implement the required data capabilities mentioned above, and begin their analytics journey with basic descriptive and diagnostic capabilities. There is immense value to be realized from just providing data and some basic analytics to the right people at the right time - capitalize on that. Predictive use cases should be a second or third step in analytics.

Companies that have predictive analytics should then move on to prescriptive and prognostic analytics. For instance, once you predict that an asset is likely to fail because its vibration readings are trending up, prescriptive analytics provides the necessary actions to prevent the failure, and prognostics takes it a step further by showing what the likely outcomes are.

However, a critical step here is to close the loop by including the predictions, prescriptions, and prognoses in a self-learning system that is used to retrain the model. This self-learning step closes the loop and increases the overall accuracy of the analytics model.
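To make the vibration example concrete, here is a minimal Python sketch of the predict, prescribe, and record steps. It is an illustration under stated assumptions, not an LNS Research method: the function names, the straight-line trend fit, the vibration limit, the planning horizon, and the action labels are all hypothetical, and a real program would use its own models and maintenance rules.

```python
# Illustrative sketch only: names, thresholds, and actions are hypothetical.

def steps_until_limit(readings, limit):
    """Predictive step: fit a least-squares line to equally spaced readings.

    Returns the estimated number of sampling intervals after the last reading
    at which the fitted line reaches `limit`, or None if there is no upward trend.
    """
    n = len(readings)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing = (limit - intercept) / slope
    return max(crossing - (n - 1), 0.0)


def prescribe(steps, horizon=10):
    """Prescriptive step: map the prediction to a recommended action."""
    if steps is not None and steps <= horizon:
        return "schedule inspection"
    return "continue monitoring"


def record_outcome(log, readings, action, failed):
    """Closed-loop step: keep what was predicted, what was done, and what
    actually happened, so a later retraining job has labeled examples."""
    log.append({"readings": list(readings), "action": action, "failed": failed})


readings = [2.0, 2.5, 3.0, 3.5, 4.0]  # made-up vibration values
steps = steps_until_limit(readings, limit=6.0)
action = prescribe(steps)

feedback_log = []
record_outcome(feedback_log, readings, action, failed=False)
print(steps, action)  # 4.0 schedule inspection
```

The point of the sketch is the shape of the loop rather than the model itself: the prediction feeds a recommended action, and the recorded outcome becomes the data a retraining step would learn from.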

Based on these recommendations, LNS Research would advise today’s industrial leaders undergoing any kind of transformation initiative to reassess their analytics program’s current state and address any gaps.

*The LNS Research maturity model classifies companies into one of five maturity levels (level-1 being the least mature) based on the adoption of best-practice capabilities. The numbers shown in the above tables represent adoption rates of corresponding best-practice capabilities at each maturity level.

All entries in this Industrial Transformation blog represent the opinions of the authors based on their industry experience and their view of the information collected using the methods described in our Research Integrity. All product and company names are trademarks™ or registered® trademarks of their respective holders. Use of them does not imply any affiliation with or endorsement by them.