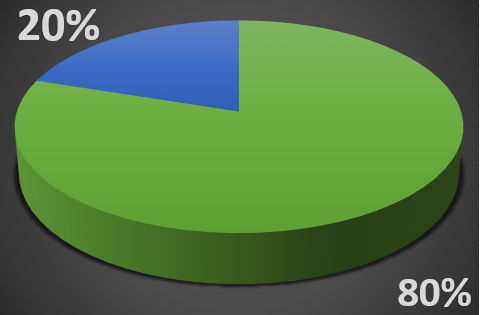

One of the business rules people often espouse, particularly when discussing quality-related issues, is Pareto’s Principle. Also known as the 80/20 rule, it reflects the observation that in many cases 80% of the effects come from 20% of the causes. The principle has been observed to be more or less true in a large number of disciplines, from natural sciences (Pareto himself observed that 80% of the peas in his garden came from 20% of the pods), to safety (20% of the hazards frequently account for 80% of the injuries), to software (20% of the bugs account for 80% of the crashes). It turns out that, in general, quality often adheres to this basic tenet as well. Understanding how it affects quality in your enterprise will go a long way toward helping you focus on the vital few. In fact, the principle itself is also called the “law of the vital few.”

The Pareto chart is a commonly used tool for identifying quality issues and for deciding which quality problems to tackle first to improve your operations. There is another way to apply the 80/20 rule in quality: it concerns the technology you deploy to help manage quality processes and the data you need to collect to achieve your quality objectives.

The Big Data Conundrum

As computing power keeps increasing and the Industrial Internet of Things (IIoT) matures, an exponentially increasing amount of data about our manufacturing processes will be unlocked, and Cloud services give us a place to aggregate that data and apply powerful analytics to it. The temptation is to collect and analyze everything, since the cost is minimal. This flies in the face of traditional quality thinking, which focuses on the vital few. Much of the research into how Big Data & Analytics will affect the 80/20 principle has focused on the customer relationship and marketing functions. Very little has been done to reconcile quality principles with Big Data. At LNS Research, our take is that the two areas are not incompatible, but you do need to understand when to apply Big Data & Analytics in the Quality process.

Using Traditional Tools to Identify the Vital Few

The Pareto chart is one of the seven basic tools of quality and builds on Joseph M. Juran’s application of the Pareto principle to quality management. It is used to identify the most common defects, the sources of those defects, or the most common customer complaints. Once the quality issue has been identified, quality practice dictates that you use other tools, such as a cause-and-effect diagram, to start identifying the root cause of the problem that is creating the defect or customer issue. This is where Big Data & Analytics can enter the picture. Given the complexity of many processes today, Big Data & Analytics can be used to assess the impact of previously unaccounted-for variables and correlate them with a quality problem to understand their contribution. Such variables could include weather conditions and the impact they might have on raw materials, as in the food and beverage industries.
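To make the Pareto analysis described above concrete, here is a minimal sketch in Python. The defect categories and counts are invented for illustration, and the function name `pareto_table` and the 80% cutoff are assumptions rather than part of any particular quality tool. The sketch ranks categories by frequency, accumulates their share of the total, and flags the categories needed to reach the cutoff as the "vital few."

```python
from collections import Counter


def pareto_table(defects, threshold=0.80):
    """Rank defect categories by count and flag the 'vital few'.

    Returns a list of (category, count, cumulative_share, is_vital) tuples,
    ordered from most to least frequent. A category is flagged as vital if
    the cumulative share *before* adding it is still below the threshold.
    """
    counts = Counter(defects)
    total = sum(counts.values())
    rows = []
    cumulative = 0
    for category, count in counts.most_common():
        is_vital = cumulative / total < threshold
        cumulative += count
        rows.append((category, count, cumulative / total, is_vital))
    return rows


# Hypothetical defect log: one entry per recorded defect.
defect_log = (
    ["scratch"] * 40
    + ["misalignment"] * 25
    + ["porosity"] * 15
    + ["discoloration"] * 10
    + ["burr"] * 6
    + ["other"] * 4
)

rows = pareto_table(defect_log)
for category, count, share, is_vital in rows:
    marker = "vital few" if is_vital else ""
    print(f"{category:<15}{count:>4}{share:>8.0%}  {marker}")
```

With this made-up data, three of the six categories account for 80% of the recorded defects, so those are the ones to carry forward into root cause analysis. Real data will rarely split at exactly 80/20, so the cutoff is best treated as a guide rather than a rule.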

Where Big Data & Analytics Can Aid in Quality Issue Determination

One area where Big Data & Analytics has already delivered high benefits is customer satisfaction assessment. Applying Big Data & Analytics to social media feeds can uncover quality issues that were never reported via normal channels. In today’s world, people tend to vent on social media rather than complain directly to the manufacturer when a product fails to deliver the expected value. By mining this social data, previously unknown problems may be discovered, and traditional quality tools can then be used to further refine the problem.
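As a rough illustration of how mined social data can feed traditional quality tools, the sketch below tallies issue categories mentioned in a handful of posts. Everything in it is hypothetical: the posts, the `ISSUE_KEYWORDS` mapping, and the `tally_issues` function are invented for this example, and simple keyword matching stands in for the far richer text analytics a real deployment would use.

```python
import re
from collections import Counter

# Hypothetical mapping from words seen in posts to quality issue categories.
ISSUE_KEYWORDS = {
    "leak": "leaking",
    "leaks": "leaking",
    "leaking": "leaking",
    "broke": "breakage",
    "broken": "breakage",
    "cracked": "breakage",
    "late": "late delivery",
}


def tally_issues(posts):
    """Count how many posts mention each issue category (once per post)."""
    counts = Counter()
    for post in posts:
        words = set(re.findall(r"[a-z']+", post.lower()))
        issues = {ISSUE_KEYWORDS[w] for w in words if w in ISSUE_KEYWORDS}
        counts.update(issues)
    return counts


# Invented sample posts standing in for a social media feed.
posts = [
    "My new bottle leaks after one week, so disappointed",
    "Lid cracked on day two and now it's leaking everywhere",
    "Arrived late but works fine",
    "Handle broke. Never buying again.",
    "Love the color!",
]

issue_counts = tally_issues(posts)
for issue, count in issue_counts.most_common():
    print(f"{issue}: {count}")
```

The resulting counts have the same shape as a defect log, so they can be ranked and charted with a Pareto analysis to decide which newly discovered issue deserves attention first.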

Applying the 80/20 Rule to Quality Application Deployment

Another place the 80/20 rule seems to apply in the Enterprise Quality Management System (EQMS) space is in the deployment of quality technology and tools. The same holds in other application or business process areas, such as Asset Performance Management (APM) or Environment, Health & Safety (EHS). In most situations, 80% of the activity in a given business process area can be handled by 20% of the applications deployed to support that process. This means that if a business uses five primary tools, one of them can most likely address 80% of all the interactions needed to manage the process. Ensuring your EQMS fits the bill will go a long way toward making it the one tool that sits at the center of your Quality program. For more on how Quality tools fit into your Enterprise Architecture, see the blog post by my colleague and LNS Research co-founder Matt Littlefield.
